Shopify A/B Testing Services: The 2026 Merchant's Guide

Launched April 2026

You’re checking Shopify analytics again. Sessions are steady. Paid traffic is landing. Your best products still get attention. But sales haven’t moved in a way that matches the effort.

That’s the point where many merchants start changing things at random. New banner. Different button colour. A stronger discount. A feature copied from a competitor. Then a redesign discussion starts, even though nobody can say which part of the current store holds performance back.

That’s where Shopify A/B testing services become useful. Not as a gadget, and not as a dashboard you log into once a month. The value is in the discipline. A good testing programme tells you what to change, why that change matters, how to validate it, and whether it improved the business or just made a graph look nicer for a week.

For UK merchants, that process has extra wrinkles that most guides skip. Some stores don’t have huge traffic, so classic test setups can take too long. Others have big seasonal spikes, which can distort results. And if your test setup ignores GDPR and PECR, you can create compliance problems while trying to improve conversion.

Your Shopify Store Has Traffic, So Why Are Sales Flat?

A familiar pattern looks like this. A store is bringing in visitors from Meta, Google, email, and repeat customers. The homepage is polished. Product pages are decent. Revenue grows in short bursts, then stalls again.

That stall is usually a conversion plateau.

What a plateau means

A plateau doesn’t mean the store is broken. It usually means the obvious fixes have already been made, and the remaining friction is harder to spot. It might be:

- Offer friction where shoppers don’t immediately understand value

- Page clarity issues where headlines and imagery don’t answer the buying question quickly enough

- Decision friction caused by too many choices, weak hierarchy, or distracting modules

- Cart hesitation where shipping, bundles, or trust cues arrive too late

Merchants often try to solve that with instinct. They say things like “the product page needs more energy” or “our competitor uses that layout”. Those instincts can help generate ideas, but they’re weak decision tools on their own.

Why copying competitors rarely works

Competitor-led changes feel safe because someone else already shipped them. But you don’t know what problem they were solving, what audience they were targeting, or whether the change even worked.

A store selling supplements to repeat buyers won’t behave like a fashion store with high first-time traffic. A gifting brand in Q4 won’t behave like a replenishment brand in February. Surface-level imitation hides those differences.

Most stores don’t need more opinions. They need a way to separate a strong idea from a costly assumption.

Professional testing work usually starts before the first experiment. It starts with diagnosis. That means reviewing analytics, behaviour patterns, and page-level friction before anyone touches the theme. If your store hasn’t had that kind of review, a proper Shopify UX audit often exposes the bottlenecks that redesign conversations miss.

Where testing changes the conversation

Once testing enters the picture, the brief changes from “make this page better” to something more useful:

- Which page has the biggest commercial upside?

- Which user hesitation appears often enough to matter?

- Which change is big enough to move results, but controlled enough to measure?

That shift is what turns flat sales into an optimisation problem rather than a guessing game.

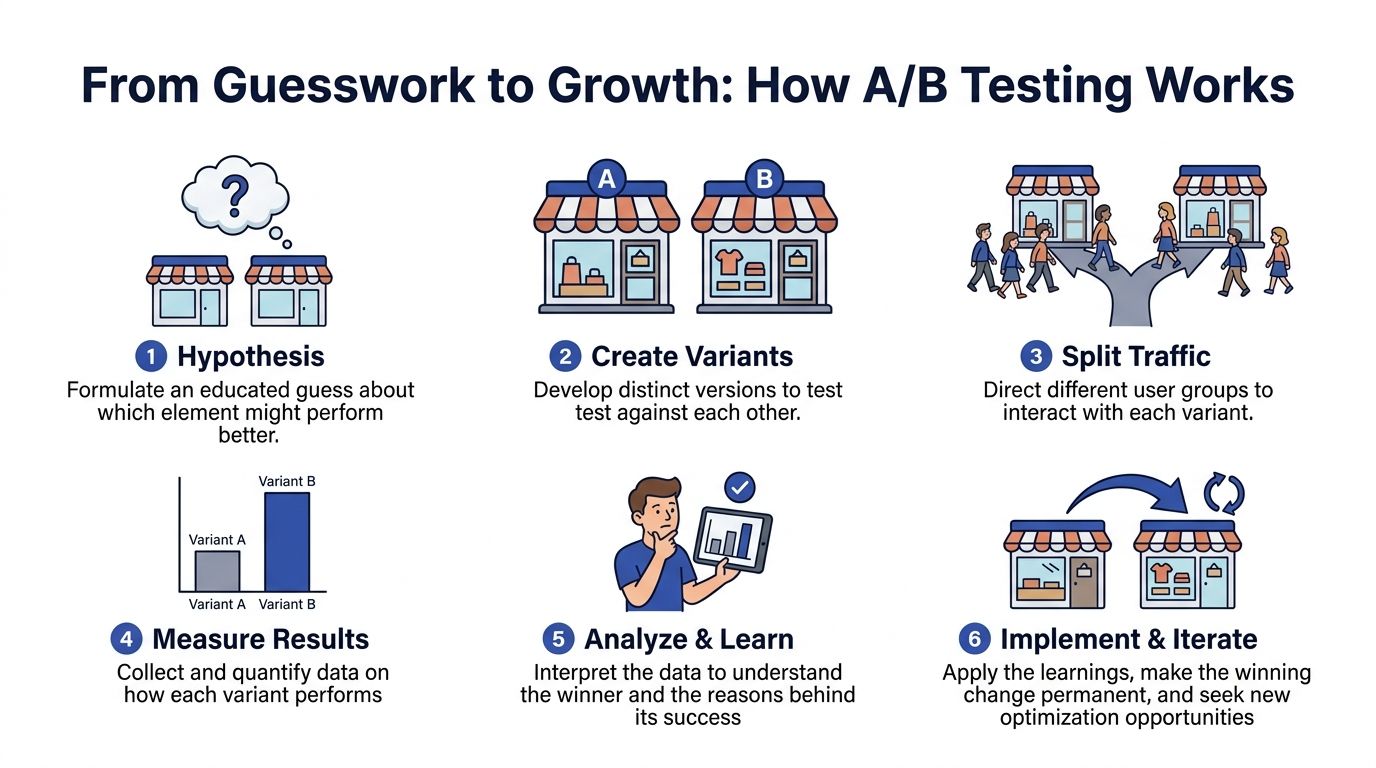

From Guesswork to Growth: How A/B Testing Works

Think about two high street shop windows selling the same product. One highlights the product plainly. The other highlights the product with a stronger headline and a clearer offer. If half the passing footfall sees window A and the other half sees window B, you can judge which display gets more people through the door.

That’s A/B testing in simple terms. On Shopify, instead of windows, you test store elements that influence buying behaviour.

What merchants usually test

On Shopify stores, common test areas include:

- Headlines and hero messaging on homepage and collection pages

- Call-to-action copy such as add-to-cart phrasing

- Product imagery including image order and emphasis

- Promotional framing like free shipping thresholds or discount presentation

- Product page layout such as trust blocks, reviews, and bundle placement

These aren’t cosmetic choices. They change what a shopper notices first, what they understand, and whether they feel confident enough to buy.

What makes a test valid

A proper test needs more than two versions of a page. It needs enough data and enough time to tell whether the difference is real or just noise.

Case studies show that methodical testing can produce 20-30% increases in conversion rates, and valid tests should usually run for 2-4 weeks while tracking conversion rate, average order value, and revenue per visitor, according to Statsig’s Shopify A/B testing guidance.

That timing matters because buyer behaviour changes across the week. A result that looks strong after a few days can disappear once weekend traffic, payday behaviour, or returning visitors enter the mix.
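
To make "enough data and enough time" concrete, here is a minimal sketch of a sample-size estimate using the standard normal approximation for comparing two conversion rates. The function names, the 5% significance level, and the 80% power target are illustrative assumptions, not figures from the guidance quoted above.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_cr: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed per variant for a two-sided test.

    baseline_cr is the current conversion rate (0.02 means 2%).
    relative_lift is the smallest improvement worth detecting (0.2 means +20%).
    alpha and power are assumed defaults, not values from the article.
    """
    p1 = baseline_cr
    p2 = baseline_cr * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


def days_to_run(visitors_needed: int, daily_visitors: int, variants: int = 2) -> int:
    """Days required, rounded up to whole weeks so each weekday counts equally."""
    days = ceil(visitors_needed * variants / daily_visitors)
    return ceil(days / 7) * 7


# Hypothetical store: 2% baseline conversion, hoping to detect a 20% relative lift.
needed = visitors_per_variant(baseline_cr=0.02, relative_lift=0.2)
duration = days_to_run(needed, daily_visitors=1500)
```

For this hypothetical store the estimate comes out at roughly 21,000 visitors per variant, which is why smaller stores often cannot detect small lifts within a few weeks. Rounding to whole weeks reflects the point about weekday and weekend behaviour.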

Practical rule: if somebody declares a winner too early, they’re often measuring volatility, not improvement.

Statistical significance without the jargon

Merchants don’t need to become statisticians, but they do need a plain-English test for whether a result should be trusted.

Statistical significance means you’ve gathered enough evidence to believe the observed result probably wasn’t random. In practice, it’s the threshold that stops teams from rolling out changes because they “looked promising” for a short stretch.
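
For readers who want to see what sits behind that threshold, the sketch below runs a two-proportion z-test, one common way to check whether a gap in conversion rates could plausibly be chance. The function name and the example numbers are hypothetical, and testing platforms may use different statistics internally.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, visitors_a: int,
                           conv_b: int, visitors_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    # Pool both groups to estimate the rate under "no real difference".
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    se = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if se == 0:
        return 1.0  # no conversions (or all conversions) in both groups
    z = (rate_b - rate_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical result: control 200 orders, variant 260 orders, 10,000 visitors each.
p_value = two_proportion_p_value(200, 10_000, 260, 10_000)
looks_significant = p_value < 0.05  # 0.05 is a conventional, assumed threshold
```

A small p-value says the observed gap would be unusual if the two versions truly performed the same. It does not say how large the real improvement is, which is why the commercial metrics still need reading alongside it.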

That’s also why agencies that know what they’re doing are strict about test design. They’ll define one core hypothesis, keep variables controlled, and avoid changing three different things at once unless there’s a good reason.

If you want a solid non-technical reference point on process and setup, Figr’s guide to A/B testing best practices is a useful companion to what an agency should already be doing for you.

What A/B testing replaces

At its best, A/B testing replaces three bad habits:

- Preference-led design where internal taste drives decisions

- Panic changes after a bad week

- Endless redesign cycles with no proof they improved revenue

It gives you something better. A repeatable way to learn which changes help customers buy.

The Agency Workflow: A Roadmap for Optimisation

A testing service isn’t just software access. The value is in the sequence. Strong agencies follow a roadmap because random tests waste time, traffic, and development effort.

Step one starts with commercial diagnosis

Before any variant is built, the agency should inspect the store as a business system, not just a collection of pages.

That usually includes:

- Analytics review to find pages with strong traffic but weak monetisation

- Behavioural review using recordings, click patterns, and funnel drop-offs

- Offer review to see whether proposition, pricing logic, bundles, or shipping thresholds create hesitation

- Device split analysis because mobile friction often behaves differently from desktop friction

A useful hypothesis comes from tension in the data. For example, a product page may have strong interest but weak add-to-cart behaviour. Or the cart may convert, but average order value stays soft because cross-sell logic is poorly framed.

Then the hypothesis gets written properly

The agency should be able to state the test clearly. Not “we want to improve the PDP”, but something like:

If we make the value of the offer easier to understand near the purchase moment, more visitors should commit without needing to search for reassurance lower on the page.

That sounds simple, but it matters. Weak hypotheses produce vague tests. Vague tests produce vague learning.

Build quality matters more than most merchants realise

Once a test is approved, the variant has to be built in a way that doesn’t damage page performance or tracking quality. At this stage, junior setups often fall apart.

The implementation phase should cover:

- Variant production inside the theme or testing platform

- Event mapping for the primary and secondary metrics

- Quality assurance across devices, templates, and edge cases

- Consent and tracking review so measurement works within the store’s compliance setup

An agency that treats QA as a formality will eventually ship broken tests. That leads to misleading data, theme conflicts, and “results” nobody should trust.

Launching a test is the quiet part

Good teams don’t spend launch week celebrating. They monitor.

The early days of a test are for checking:

- whether traffic is splitting correctly

- whether orders are attributed correctly

- whether a script or app conflict is affecting one variant

- whether a page component breaks under a specific device or browser condition
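
The first check on that list, whether traffic is splitting correctly, can be sketched as a sample ratio mismatch test: a chi-square comparison of the visitors each version actually received against the intended split. The function name and the 0.001 alert threshold are assumptions for illustration.

```python
from math import erfc, sqrt


def srm_p_value(visitors_a: int, visitors_b: int,
                expected_share_a: float = 0.5) -> float:
    """P-value for the observed traffic split versus the intended split."""
    total = visitors_a + visitors_b
    expected_a = total * expected_share_a
    expected_b = total - expected_a
    chi2 = ((visitors_a - expected_a) ** 2 / expected_a
            + (visitors_b - expected_b) ** 2 / expected_b)
    # Survival function of a chi-square distribution with 1 degree of freedom.
    return erfc(sqrt(chi2 / 2))


# Hypothetical early check: a 50/50 test that received 5,000 vs 5,400 visitors.
p_split = srm_p_value(5_000, 5_400)
split_looks_broken = p_split < 0.001  # assumed alert threshold
```

A very small p-value here suggests the allocation itself is faulty, for example a redirect, script, or app conflict affecting one variant, and the test results should not be trusted until the cause is found.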

This is also why disciplined teams don’t keep editing the variant after launch unless there’s a hard issue. Every unnecessary change muddies the result.

Low-traffic stores need a different playbook

This is one of the biggest gaps in generic CRO advice. A smaller UK Shopify store often can’t run endless classic fixed-sample tests and wait for clean outcomes.

For UK SMEs under 10k monthly visitors, agencies often prioritise Bayesian sequential testing over traditional fixed-sample methods. That approach has been shown to lift conversion rates by 18% for low-traffic stores, compared with 7% for traditional methods, according to Wisepops.

That doesn’t mean every small store should test everything. It means smaller stores need sharper prioritisation.
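
As a rough illustration of the Bayesian idea, the sketch below estimates the probability that a variant's true conversion rate beats the control's, using a Beta posterior and simple simulation. It is a simplified sketch, not a full sequential procedure and not the method used in the Wisepops figures; the uniform prior, the function name, and the example numbers are all assumptions.

```python
import random


def prob_b_beats_a(conv_a: int, visitors_a: int,
                   conv_b: int, visitors_b: int,
                   draws: int = 20_000, seed: int = 42) -> float:
    """Monte Carlo estimate of P(rate_b > rate_a) under uniform Beta(1, 1) priors."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same estimate
    wins = 0
    for _ in range(draws):
        # Posterior for each rate: Beta(1 + conversions, 1 + non-conversions).
        rate_a = rng.betavariate(1 + conv_a, 1 + visitors_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + visitors_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


# Hypothetical low-traffic test: 30 vs 48 orders from 1,500 visitors each.
chance_variant_is_better = prob_b_beats_a(30, 1_500, 48, 1_500)
```

A sequential approach would recompute this figure as data arrives and stop once it crosses a decision threshold agreed before launch. The threshold and the stopping rules need to be fixed in advance, otherwise the method invites the same early-winner problem described earlier.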

What changes for seasonal brands

Seasonal brands need a more selective roadmap. If traffic arrives in bursts, the agency should focus on tests that answer high-value questions quickly.

A practical seasonal workflow often looks like this:

- Prioritise high-impact pages such as top-selling PDPs or landing pages tied to paid campaigns

- Choose larger contrasts rather than tiny cosmetic tweaks

- Use pre-peak windows to validate ideas before major trading periods

- Document learnings carefully so next season starts from evidence, not memory

That’s the service merchants pay for. Not just test setup, but prioritisation, implementation discipline, interpretation, and adaptation to the store’s traffic reality.

Measuring Real Business Impact with the Right Metrics

A lot of merchants ask one question after a test ends: did conversion rate go up?

That’s understandable, but it’s incomplete. Conversion rate is important. It just isn’t enough on its own.

Why conversion rate can mislead you

A test can improve conversion rate while making the business worse. That happens when the variant nudges more people to buy, but lowers basket value enough that the revenue gain disappears.

The opposite can also happen. A variant might convert slightly fewer shoppers, but raise basket quality so much that total revenue per visitor improves.

That’s why serious Shopify A/B testing services report three commercial metrics together:

- Conversion rate

- Average order value

- Revenue per visitor

Those metrics work as a set. One tells you how often people buy. One tells you how much they spend. One tells you what each visitor is worth overall.
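
The relationship between the three metrics is simple arithmetic: revenue per visitor equals conversion rate multiplied by average order value. The sketch below uses made-up totals to show a variant that wins on conversion rate yet earns slightly less per visitor; the class name and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class VariantTotals:
    visitors: int
    orders: int
    revenue: float

    @property
    def conversion_rate(self) -> float:
        return self.orders / self.visitors

    @property
    def average_order_value(self) -> float:
        # Guard against a variant with no orders yet.
        return self.revenue / self.orders if self.orders else 0.0

    @property
    def revenue_per_visitor(self) -> float:
        return self.revenue / self.visitors


# Illustrative totals only, not data from a real test.
control = VariantTotals(visitors=10_000, orders=200, revenue=12_000.0)
variant = VariantTotals(visitors=10_000, orders=230, revenue=11_960.0)

for name, totals in (("control", control), ("variant", variant)):
    print(f"{name}: CR {totals.conversion_rate:.2%}, "
          f"AOV {totals.average_order_value:.2f}, "
          f"RPV {totals.revenue_per_visitor:.3f}")
```

In this example the variant converts more visitors, but the lower basket value cancels the gain, so a report that showed only conversion rate would call it a winner.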

A simple way to read test outcomes

Here’s how to think about common result patterns:

| Outcome pattern | What it usually means | What to ask next |
|---|---|---|
| CR up, AOV flat | More shoppers are completing purchase | Is the gain stable across device and traffic source? |
| CR up, AOV down | The variant may be pulling in lower-value orders | Did discount framing or offer positioning change basket behaviour? |
| CR flat, AOV up | Fewer extra buyers, but stronger order economics | Did bundles, upsells, or shipping logic improve purchase quality? |
| CR down slightly, RPV up | The store may be filtering towards better transactions | Is total revenue improving enough to justify the trade-off? |

A weak agency report stops at “the variant won”. A useful report explains how it won and whether the gain is worth rolling out.

The metric that ties the story together

If I had to choose one metric to anchor commercial reporting, it would usually be revenue per visitor.

It forces discipline. You can’t hide behind pretty engagement lifts or a small conversion bump if each visitor is worth less after the change. RPV ties test outcomes back to the thing merchants care about, which is better monetisation of the traffic they already paid for.

A test result only matters if it improves the economics of the store, not just the optics of the dashboard.

Why AOV deserves more attention

Average order value often gets treated as a secondary bonus. It shouldn’t.

Some of the strongest gains in Shopify optimisation come from improving how products are packaged, compared, bundled, or framed at the point of purchase. That’s especially true for brands with loyal customers, multi-buy behaviour, or room to improve merchandising logic.

The publisher reports a significant AOV lift from a bundle creator test. That’s a good reminder that not every valuable experiment is about squeezing more people through checkout. Sometimes the better move is increasing order quality.

What to ask for in reporting

When reviewing an agency’s output, ask for:

- A primary KPI tied to the test hypothesis

- Secondary KPIs that protect against accidental downside

- Segment-level cuts where relevant, especially mobile versus desktop

- A clear rollout recommendation with business rationale

- A learning statement that can inform the next experiment

If reporting only celebrates wins and doesn’t explain trade-offs, the programme isn’t mature enough yet.

The Technology Behind Shopify A/B Testing

The tooling matters, but not in the way most merchants think. The wrong question is “which app should we install?” The better question is “which setup lets us test reliably on our store without corrupting speed, UX, or data?”

Third-party tools versus native Shopify options

Most stores will look at platforms such as VWO, Convert, Shoplift, or similar experimentation tools. These can be useful when you need visual editing, audience targeting, broader reporting, or specific test types.

Shopify has also introduced Rollouts. Coverage of the winter 2026 release describes it as a native way to stage theme changes to a percentage of traffic, which gives server-side style deployment control over storefront changes, as outlined in Convert’s write-up on Shopify Rollouts.

The practical difference is this:

- Client-side testing changes the page in the browser after it starts loading

- Server-side or rollout-led approaches decide the experience earlier, which can reduce visual flicker and deployment risk
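
Deciding the experience earlier usually relies on deterministic assignment: the same visitor always lands in the same version, with no waiting for a browser script to choose. The sketch below shows one generic way to do that with a hash. It does not describe how Shopify Rollouts or any named tool works internally, and the function name and identifiers are hypothetical.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str,
                   variant_share: float = 0.5) -> str:
    """Map a visitor to 'control' or 'variant' in a stable, repeatable way."""
    # Including the experiment name keeps assignments independent across tests.
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # value in the range [0, 1)
    return "variant" if bucket < variant_share else "control"


# The same hypothetical visitor gets the same answer on every request.
first_visit = assign_variant("visitor-123", "pdp-trust-block")
second_visit = assign_variant("visitor-123", "pdp-trust-block")
```

Because the assignment depends only on the identifier and the experiment name, it can be computed before the page is rendered, which is what removes the flicker associated with late client-side changes.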

Why client-side tests sometimes go wrong

Client-side tools are often easier to launch, but they can create issues if implementation is sloppy. The common problems are:

- visible flicker before the variant appears

- inconsistent event firing

- layout shifts that affect user behaviour

- conflicts with apps or custom theme logic

These aren’t edge cases. They directly affect result quality. A variant that loads awkwardly may underperform for technical reasons rather than commercial ones.

One technical choice has outsized impact

On Shopify, where you place the testing script matters. Direct integration into theme.liquid is often more reliable than dropping the tool in through Google Tag Manager.

According to Varify’s Shopify testing guidance, direct theme integration helps avoid the script loading delays and tracking instability that GTM-based asynchronous loading can cause on Shopify storefronts. Varify’s explanation of Shopify A/B test implementation is a useful reference when you’re reviewing an agency’s technical plan.

That’s a detail many merchants never ask about. They should. Bad script placement can make a careful test programme look unreliable.

Rollouts are useful, but not universal

Native rollout-style testing is appealing because it can de-risk larger theme changes. If you’re comparing a major navigation update, collection layout shift, or redesigned product template, traffic staging can be safer than pushing a full launch and hoping for the best.

But native methods aren’t automatically enough for every CRO programme. Some teams still need:

- stronger audience segmentation

- easier variant management

- richer reporting

- more flexible experimentation across specific page elements

That’s why many agencies combine platform-native capabilities with external tooling and custom implementation.

The hidden layer is QA

Testing software can tell you a variant won. It can’t guarantee the variant rendered correctly in every state.

That’s why technical QA should include visual checks before and during the experiment. If you want to understand the broader category of tooling used for that kind of safeguard, this overview of visual regression testing tools is helpful context.

For more complex storefronts, especially custom themes and Plus builds, testing architecture needs to be discussed alongside broader development decisions. That’s where a store with a custom stack often benefits from a stronger Shopify Plus development foundation before the experimentation layer scales.

One agency, one platform, or a mixed stack

There isn’t a universal tool stack. The right setup depends on the store’s theme complexity, traffic, technical debt, consent setup, and the kinds of experiments you want to run.

One option in the market is Grumspot, which, according to the publisher, uses A/B testing as part of broader CRO work and has co-developed a Shopify app for price testing. That’s one example of an agency-plus-tool model. Other merchants will prefer a pure software route with internal resources, or an agency using platforms like VWO or Convert on their behalf.

The key is not the logo on the dashboard. It’s whether the setup produces trustworthy data without damaging the storefront experience.

Evaluating Agency Pricing and Finding the Right Partner

Pricing for Shopify A/B testing services can look confusing because agencies package the work in different ways. That doesn’t mean the offers are equivalent.

You’re not only buying test launches. You’re buying prioritisation, implementation quality, analysis, and risk management. In the UK, that last part includes GDPR and PECR.

Comparing common pricing models

Here’s the practical difference between the models you’ll usually see.

| Model | Best For | Pros | Cons |
|---|---|---|---|
| One-off project | Stores that want an initial test sprint or audit-led pilot | Clear scope, lower commitment, useful for proving value | Often stops before a real experimentation rhythm forms |
| Monthly retainer | Brands that want ongoing optimisation and faster iteration | Continuous roadmap, easier prioritisation, better learning accumulation | Needs internal buy-in and a longer-term view |
| Performance-based fee | Merchants that want commercial alignment | Incentives can feel attractive when goals are shared | Measurement disputes can appear if attribution and baseline rules aren’t clear |

What a cheap proposal often leaves out

Low-cost testing offers usually cut corners in one of four places:

- Research depth before choosing what to test

- Development quality during variant setup

- Reporting depth after the test ends

- Compliance process around tracking and consent

That last point matters more than many merchants realise. In the UK, A/B tests are subject to GDPR and PECR, and a meaningful share of ecommerce tests are non-compliant because explicit consent for tracking isn’t handled. Delays caused by regulatory uncertainty are common, which is why Fermat’s discussion of Shopify A/B testing and compliance is worth reading before you appoint a partner.

Questions worth asking before you sign

A serious agency should answer these cleanly.

Ask how they handle lower or seasonal traffic

If your store isn’t high volume all year, ask what methodology they use when classic testing is slow or underpowered. The answer should involve prioritisation logic, test sizing, and a realistic explanation of what can and can’t be validated.

Ask to see sample reporting

You want more than screenshots of uplift headlines. Ask whether reports include:

- RPV alongside CR

- AOV analysis

- segment breakdowns

- clear rollout recommendations

- documented learnings from inconclusive tests

If a report only celebrates wins, you won’t learn much from the programme.

Ask about compliance workflow

For UK merchants, this is essential. Ask:

- how variant allocation works with consent management

- which events are tracked before and after consent

- how experiment data is retained

- how legal and analytics requirements are reconciled

A vague answer here is a warning sign.
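
A clear answer often comes down to a simple rule that can be written out. The sketch below assumes a hypothetical store policy where no experiment events are recorded until the visitor has opted in. The class and event names are invented for illustration, and the right policy for a given store depends on its own legal advice and consent platform.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentAwareTracker:
    """Records experiment events only after the visitor has granted consent."""
    consent_granted: bool = False
    sent: list = field(default_factory=list)

    def grant_consent(self) -> None:
        self.consent_granted = True

    def track(self, event: str) -> bool:
        # Assumed policy: events before consent are dropped, not queued.
        if not self.consent_granted:
            return False
        self.sent.append(event)
        return True


tracker = ConsentAwareTracker()
tracker.track("variant_viewed")   # dropped: no consent yet
tracker.grant_consent()
tracker.track("add_to_cart")      # recorded
```

Under a rule like this, visitors who never consent are missing from the experiment data, so an agency should be able to explain how that affects sample size and how results are interpreted.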

The right agency doesn’t treat compliance as a blocker. It treats it as a design constraint that needs to be solved.

Ask what happens after a winner is found

Some providers stop at the result. Better partners handle rollout, post-test QA, and the next test backlog. That’s where real compounding happens.

The fit question matters more than the headline fee

A smaller merchant may start with a focused project. A scaling brand with paid traffic, merchandising complexity, and a full product roadmap usually benefits more from a retainer model. Neither is automatically right.

What matters is whether the partner can work at the level your store needs. If you’re comparing broader optimisation partners rather than pure experiment vendors, this breakdown of what a Shopify CRO agency typically does can help frame the evaluation.

The strongest partner is rarely the one with the flashiest test screenshots. It’s the one that can explain trade-offs clearly, work within your store’s traffic reality, and protect both data quality and compliance while improving revenue.

Frequently Asked Questions

Do I need an agency if I can buy a testing tool myself?

Not always. A tool is enough if your team can handle research, test design, implementation, QA, and analysis without slipping into guesswork. Most merchants discover the bottleneck isn’t launching a variant. It’s choosing the right hypothesis and trusting the result.

What should I test first on a Shopify store?

Start where commercial upside and clarity meet. Product pages, offer framing, shipping thresholds, bundles, and key landing pages usually beat low-impact cosmetic tweaks. The best first test isn’t the easiest one to launch. It’s the one most likely to remove buying friction.

Can small stores still benefit from testing?

Yes, but they need a stricter approach. Smaller stores should avoid lots of tiny tests on minor details. They usually benefit more from bigger hypothesis-led changes, careful prioritisation, and methods suited to lower traffic rather than rigid fixed-sample routines.

How long should a test run?

Long enough to capture normal buying behaviour and avoid reacting to early noise. Rushing to a decision is one of the easiest ways to create false winners. Agencies that are too eager to call results usually create more confusion than insight.

Is A/B testing legal under UK rules?

Yes, but the setup has to respect UK privacy requirements. That means consent, tracking design, and data handling need to be thought through before launch. Compliance shouldn’t be bolted on after the experiment is live.

Should I care more about conversion rate or revenue?

Revenue. More precisely, the relationship between conversion rate, average order value, and revenue per visitor. A test that lifts conversion but weakens order value may not help the business. The point is better economics, not just a prettier KPI.

What’s a sign an agency knows what it’s doing?

They’ll talk as much about what not to test as what to test. They’ll ask about traffic quality, seasonality, implementation constraints, and consent setup before promising wins. That’s usually a good sign the work is grounded in reality.

If you want a practical partner to review your store, identify what’s worth testing, and build a structured CRO roadmap around Shopify A/B testing services, take a look at Grumspot.
